The ability of computer vision to analyze data in real time and understand what’s in an image will help create new CONOPS. (AeroVironment photo)

Adding these technological capabilities to robotic systems of all sorts will completely change the way analysts work with sensor feeds and images. We discussed this boon to autonomy with AeroVironment’s Scott Newbern, vice president and chief technology officer, and Timothy Faltemier, vice president and general manager of Learning and Active Perception (LEAP).

Breaking Defense: Before we talk about computer vision and how it reduces cognitive load for operators through autonomy, first please define computer vision.

Timothy Faltemier is vice president and general manager of Learning and Active Perception at AeroVironment.

Faltemier: The best way to think about computer vision is by looking at how humans analyze data. We see the objects in a picture and where they are. That picture is worth a thousand words, and computer vision is trying to create a thousand words from that picture.

The capability has grown over the past 10 years from initially being able to find a dog in a baseball field to now truly getting to the point where we can say a thousand words about that image. It’s not just the dog, but the kind of dog. Is the dog running? Where’s the dog pointing relative to the camera? Is there a kid in the background and does the kid have a baseball? Is that baseball being thrown?

Computer vision is trying to instantaneously look at that picture and summarize it in a way humans can understand.

Breaking Defense: How are those thousand words of description presented to the user? How does it reduce their cognitive load?

Faltemier: In a nutshell, how do I take the operator from being someone who must watch the different sensor feeds and images to someone who can ask questions about the sensor feed or images?

Think of a casino. A casino has a thousand cameras. For years they’ve had an army of people watching every one of those cameras for whatever things they were told to watch for, be it cheats or high-value individuals.

What we do is look at all thousand cameras at the same time and set conditions or alerts. For example, if the operator is interested in every time that Scott Newbern walks through the casino, the system can alert a particular hostess because he’s a high-value individual.

That’s the kind of technology that we’re providing that reduces cognitive load. Think of the TSA. They’re watching constantly, nonstop, every single bag that goes through that scanner. What we want to do is have TSA be alerted whenever the system thinks they’ve found something, so they can be either monitoring more lines concurrently or doing a much deeper dive into ones that the system is unsure of.
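The alerting idea described above can be sketched in a few lines of Python. This is a minimal illustration, not AeroVironment's implementation: the `Detection` record, the `triage` function, the label names, and both thresholds are hypothetical, and the sketch assumes some upstream detector already emits labeled detections with confidence scores.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    camera_id: int      # which feed produced the detection
    label: str          # what the (hypothetical) detector thinks it saw
    confidence: float   # detector score in [0, 1]


# Illustrative thresholds only; not values from any real system.
ALERT_THRESHOLD = 0.9    # confident enough to alert automatically
REVIEW_THRESHOLD = 0.5   # uncertain: hand to a human for a deeper look


def triage(detections, watch_labels):
    """Split detections into automatic alerts and items needing human review."""
    alerts, review = [], []
    for det in detections:
        if det.label not in watch_labels:
            continue  # the operator did not ask about this kind of object
        if det.confidence >= ALERT_THRESHOLD:
            alerts.append(det)
        elif det.confidence >= REVIEW_THRESHOLD:
            review.append(det)
    return alerts, review


feed = [
    Detection(12, "watched_person", 0.97),
    Detection(404, "watched_person", 0.62),
    Detection(7, "dog", 0.99),
    Detection(88, "watched_person", 0.20),
]
alerts, review = triage(feed, watch_labels={"watched_person"})
print([d.camera_id for d in alerts])   # feeds that trigger an alert
print([d.camera_id for d in review])   # feeds flagged for a closer look
```

The operator never watches the feeds directly here. They state what they care about (`watch_labels`) and only see the two short lists that come back.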

The government is investing in new and different sensors – everything from satellites to UAVs and USVs. All of the data collected by those platforms is becoming available. The more that we can turn it over to computer vision-based perception systems, the more we can get away from every system being stove-piped and holding its own data, and move toward a network that leverages data across different systems.

Our ability to analyze that data in real time and to understand what’s in there will only bring us new CONOPS that we would never have imagined before.

Breaking Defense: Where does perceptive autonomy fit in?

Faltemier: That’s where we think the next generation is. Again, back to the casino example, we’re sitting back and passively watching all these cameras. We can’t do anything other than report on what we have seen.

Perceptive autonomy is the next mountain to climb, which says if a human found something in that scene, what would the human’s physical actions be? Here’s what I mean. In the show CSI, for example, a crime would be committed during a storm at night. By happenstance, there’s a camera looking directly at the perpetrator’s license plate, but it was 100 yards away and they couldn’t see that information. In the magic of CSI, they say, ‘Zoom enhance’ and they can pinch and grab and see the license plate.

In reality that doesn’t exist. But what a human would do if the human was there during that time would be to take the controls of the camera and zoom in to see the license plate. Then you can zoom out and track the car.

Perceptive autonomy is taking the actions that a human would take based on the information provided by computer vision. The net effect is a system that is 10 or 100 times better than the computer-vision component alone, because now you can see things that you couldn’t see before.
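The license-plate example can be sketched as a small decision rule: if the detected object is too small in the frame to read, ask the camera to zoom in by just enough. This is only an illustration under stated assumptions. The function name, the readable-width value, and the zoom limit are hypothetical, and the sketch assumes apparent width scales linearly with optical zoom.

```python
def zoom_factor_needed(box_width_px: float,
                       min_readable_px: float,
                       max_zoom: float) -> float:
    """Return the zoom multiplier that makes the object wide enough to read.

    Assumes apparent width grows linearly with optical zoom. The result is
    never below 1.0 (no zoom) and never above the camera's max_zoom.
    """
    if box_width_px <= 0:
        raise ValueError("box width must be positive")
    needed = min_readable_px / box_width_px
    return min(max(needed, 1.0), max_zoom)


# A plate detected 20 px wide, assumed to need 100 px to be legible.
print(zoom_factor_needed(20, 100, max_zoom=30.0))    # 5.0
# Already large enough: no zoom requested.
print(zoom_factor_needed(200, 100, max_zoom=30.0))   # 1.0
# Too far away even at full zoom: request is capped at the limit.
print(zoom_factor_needed(2, 100, max_zoom=30.0))     # 30.0
```

The point of the sketch is the feedback path: the computer-vision output (a bounding box) drives a physical action (zoom), which in turn gives the vision system a better image to work with.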

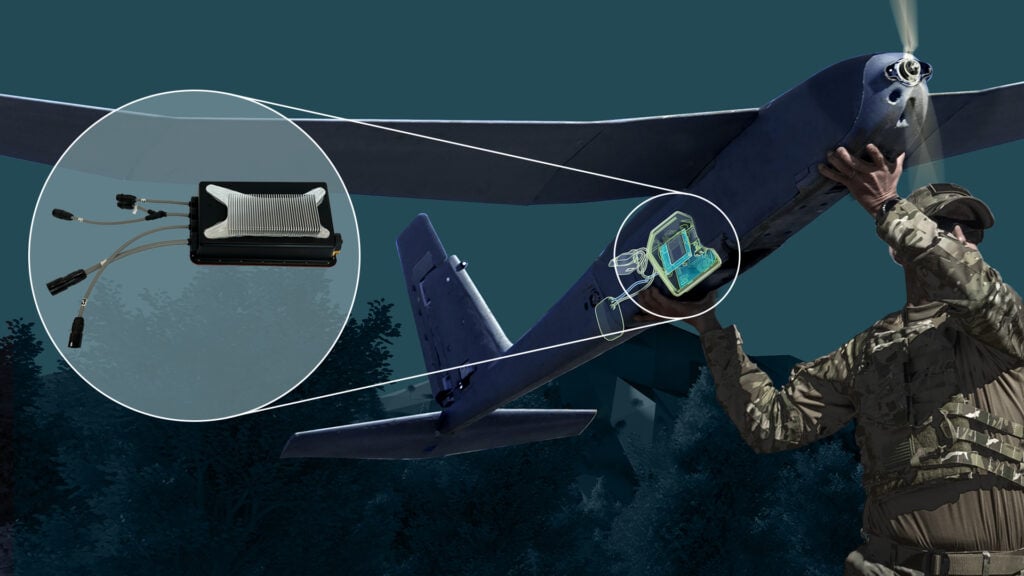

Computer vision and perceptive autonomy can automatically handle the tracking while the analyst does the analyzing. (AeroVironment photo)

Breaking Defense: In what ways do AV’s autonomous capabilities accelerate OODA loops?

Scott Newbern is vice president and chief technology officer at AV.

Newbern: I will put this in the context of our recent product release of AV’s Autonomy Retrofit Kit (ARK) and AVACORE software. These are ways to deploy an autonomy framework that not only supports all the products across AV’s families of systems and our business segments but also has the potential to add autonomy to any platform.

It’s an open framework for the rapid development and adoption of new behaviors such as AV’s perceptive autonomy. It allows you to connect multiple radios and disparate systems and enable platforms that didn’t have autonomy before to close the OODA loop faster.

There’s a lot of talk about the command-and-control network deploying autonomy. But in most cases, the real autonomy is dependent on the platform itself, and it’s inherent in that system. A lot of systems aren’t architected in a way to do that.

Our recent framework deployment in ARK allows us to deploy autonomy in those platforms onboard, on the edge.

Breaking Defense: How does a solution like ARK stand out in the field?

Newbern: There are not a lot of other solutions in the field, so it’s tough to do that comparison. There are some government architectures and platforms that have some similarities, but not a lot being used downrange.

One of the critical features that helps drive where we’re headed is our experience. We’re a 53-year-old technology company and pioneer of uncrewed systems. We stand behind our reputation of having things do what we say they’re going to do with a high level of integration. As we are architecting this system, we’re relying on that basis and philosophy in deployment.

Beyond that it is, again, a core architecture and an open agnostic enabler for our platforms and other platforms across multiple domains. We talk a lot about aircraft because that’s most of our heritage, but the architecture can deploy across surface and under-surface vessels, as well as uncrewed ground vehicles.

We have our autonomy architecture and applications like SPOTR-Edge – AV’s computer-vision software for onboard detection, classification, localization, and tracking of operationally relevant objects – but at its core, the framework is an open framework and can host either government-developed autonomy capabilities or other companies’ algorithms.

Another distinguishing feature is that it’s represented by both hardware and software. As mentioned, for a platform that doesn’t have these kinds of capabilities, this is an enabler. You could add it to another system, and now you have an edge capability onboard that gives you SPOTR-like features and other behavioral autonomy.

Breaking Defense: How specifically does what we’ve been discussing speed up the OODA loop?

Faltemier: Because we are doing processing onboard each one of those assets, the amount of time that is taken for the autonomy system to decide is vastly reduced.

Let’s take a concrete example. A Switchblade today carries a camera and a radio, and the pilot on the ground tells it to go to a certain area. They see the target on their ground station, they click on that target, and then they continue to let the system know that is the target they’re interested in. It’s a great use case and it works well.

One of the challenges with that use case, though, is the amount of time that it takes to get the imagery from that platform back to the human user. What we can do from the computer vision side is to automate that.

Rather than sending back an image and saying, ‘Human, click on the target,’ we can automatically click on the target for them and let the human sit back and review the answers the computer has produced, so you don’t have that delay in reaction time. The system already understands what the target is and is doing its job to track it. The human is just seeing if anything is wrong or if they want to do anything different.

That takes away the responsibility and the heavy cognitive load of having to make sure that the target is constantly centered. This frees them up to look at other potential targets and things of interest in the scene because they don’t have to focus 100 percent of their attention on that exact task.
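Keeping a tracked target centered is the kind of task that lends itself to a simple onboard loop. The sketch below is a generic proportional correction, not the method used on any AV product. The function names, the gain, and the deadband are hypothetical, and the bounding box is assumed to come from an onboard tracker in pixel coordinates with the origin at the top-left of the frame.

```python
def centering_error(box, frame_w, frame_h):
    """Offset of the box center from the frame center, normalized to [-1, 1].

    box is (x_min, y_min, x_max, y_max) in pixels. Positive dx means the
    target is right of center; positive dy means it is below center.
    """
    x_min, y_min, x_max, y_max = box
    cx = (x_min + x_max) / 2
    cy = (y_min + y_max) / 2
    dx = (cx - frame_w / 2) / (frame_w / 2)
    dy = (cy - frame_h / 2) / (frame_h / 2)
    return dx, dy


def gimbal_command(box, frame_w, frame_h, gain=0.5, deadband=0.05):
    """Proportional pan/tilt correction; small errors are ignored."""
    dx, dy = centering_error(box, frame_w, frame_h)
    pan = gain * dx if abs(dx) > deadband else 0.0
    tilt = gain * dy if abs(dy) > deadband else 0.0
    return pan, tilt


# Target sits right of center in a 1280x720 frame, vertically centered.
print(gimbal_command((800, 300, 960, 420), 1280, 720))   # (0.1875, 0.0)
```

Run once per frame, a loop like this does the centering work the operator would otherwise do by hand, which leaves the operator to review the track rather than maintain it.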

Newbern: Or even not look at the scene. If you go to the OODA loop – observe, orient, decide, act – you can potentially take the operator out of all of that. The observe, orient, and decide pieces become almost independent in the platform itself, and you can leave the decide and act portions to the operator, or not.

Faltemier: Here’s where that is a benefit and where things are going, in our opinion. Right now we fly a Puma to do ISR over an area. That’s a great use case, and the human is watching that video feed as it’s coming down and making decisions about what they want to do next.

What if we were flying 10 Pumas, but you still only had one individual? In theory, if nothing else changed, you could cover 10 times the area, completely mapping out that space. And the operator has to take action only when a condition they asked to be notified about is met.

That’s the benefit of having computer vision-based perceptive autonomy onboard: it’s effectively carrying out the commander’s intent for the operator and just reporting up, changing the role from an imagery analyst to an imagery reviewer.

Breaking Defense: I understand AV will be discussing computer vision, perceptive autonomy, and the work it’s doing to speed up the OODA loop during a webinar on June 19 after the MOSA Industry & Government Summit.

Newbern: I’ll be hosting a panel of experts to discuss how autonomy and AI are giving our warfighters and allies an advantage at the tactical edge today. They will talk about how the landscape of global conflict is rapidly changing and how technologies are evolving across this accelerated battlefield – on land, in air, and at sea.

Our panel will be moderated by Bryan Clark from the Hudson Institute and include Tim discussing computer vision, along with Dominik Wermus from Johns Hopkins University Applied Physics Laboratory and Matt Turek from DARPA. Registration is complimentary.