The military is weighing heavily how to use AI. (Graphic by Breaking Defense, original images via Getty and DVIDS)

WASHINGTON — Thirteen months after the State Department rolled out its Political Declaration on ethical military AI at an international conference in The Hague, representatives from the countries that signed on will gather outside of Washington to discuss next steps.

“We’ve got over 100 participants from at least 42 countries of the 53,” a senior State Department official told Breaking Defense, speaking on background to share details of the event for the first time. The delegates, a mix of military officers and civilian officials, will meet at a closed-door conference March 19 and 20 at the University of Maryland’s College Park campus.

“We really want to have a system to keep states focused on the issue of responsible AI and really focused on building practical capacity,” the official said.

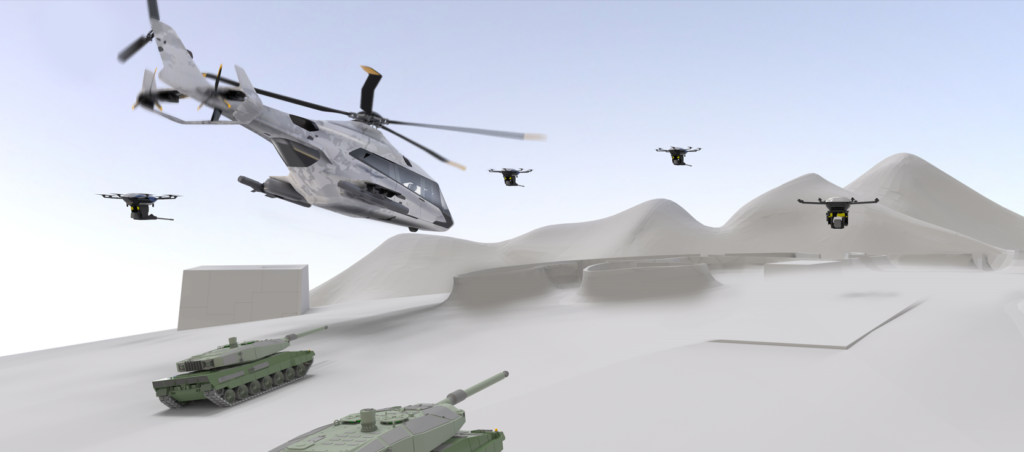

On the agenda: every military application of artificial intelligence, from unmanned weapons and battle networks, to generative AI like ChatGPT, to back-office systems for cybersecurity, logistics, maintenance, personnel management, and more. The goal is for delegates to share best practices, discuss models like the Pentagon’s online Responsible AI Toolkit, and build their personal expertise in AI policy to take home to their governments.

That cross-pollination will help technological leaders like the US refine their policies, while also helping technological followers in less wealthy countries to “get ahead of the issue” before investing in military AI themselves.

This isn’t just a talking shop for diplomats, the State official emphasized. Next week’s meeting will feature a mix of military and civilian delegates, with the civilians coming not just from foreign ministries but also from the independent science & technology agencies found in many countries. The very process of organizing the conference has served as a useful forcing function, the official said, simply by requiring signatory countries to figure out who to send and which agencies in their governments should be represented.

State wants this to be the first of an indefinite series of annual conferences hosted by fellow signatory states around the world. In between these general sessions, the State official explained, smaller groups of like-minded nations should get together for exchanges, workshops, wargames, and more — “anything to build awareness of the issue and to take some concrete steps” towards implementing the declaration’s 10 broad principles [PDF]. Those smaller fora will then report back to the annual plenary session, which will codify lessons, debate the way forward, and set the agenda for the coming year.

RELATED: How GIDE grows: AI battle network experiments are expanding to Army, allies and industry

“We value a range of perspectives, a range of experiences, and the list of countries endorsing the declaration reflects that,” the official said. “We’ve been very gratified by the breadth and depth of the support we’ve received for the Political Declaration.

“53 countries have now joined together,” the official said, up from 46 (US included) announced just a few months ago in November. “Look carefully at that list: It’s not a US-NATO ‘usual suspects’ list.”

The nations that have signed on are definitely diverse. They include core US allies like Japan and Germany; more troublesome NATO partners Turkey and Hungary; wealthy neutrals like Austria, Bahrain, and Singapore; pacifist New Zealand; war-torn Ukraine (which has experimented with AI-guided attack drones); three African nations, Liberia, Libya, and Malawi; and even minuscule San Marino. Notably absent, however, are not only the Four Horsemen that have long driven US threat assessments — China, Russia, Iran, and North Korea — but also infamously independent-minded India (despite years of US courtship on defense), as well as most Arab and Muslim-majority nations.

That doesn’t mean there’s been no dialogue with those countries. Last November, in two events just weeks apart, China joined the US in signing the broader Bletchley Declaration on AI across the board (not only military) at the UK’s AI safety summit, and Chinese President Xi Jinping agreed to vaguely defined discussions on what US President Joe Biden described after their summit in California as “risk and safety issues associated with artificial intelligence.” Both China and Russia participate in the regular Geneva meetings of the UN Group of Governmental Experts (GGE) on “Lethal Autonomous Weapons Systems” (LAWS), although activists aiming for a ban on “killer robots” say those talks have long since stalled.

RELATED: Ethical Terminators, or how DoD learned to stop worrying and love AI: 2023 Year in Review

The State official took care to say the US-led process wasn’t an attempt to bypass or undermine the UN negotiations. “Those are important discussions, those are productive discussions, [but] not everyone agrees,” they said. “We know that disagreements will continue in the LAWS context — but I don’t think that we are well advised to let those disagreements stop us, collectively, from making progress where we can” in other venues and on other issues.

Indeed, it’s a hallmark of State’s Political Declaration — and the Pentagon’s approach to AI ethics, from which it draws — that it addresses not just futuristic “killer robots” and SkyNet-style supercomputers, but also other military uses of AI that, while less dramatic, are already happening today. That includes mundane administrative and industrial applications of AI, such as predictive maintenance. But it also encompasses military intelligence AIs that help designate targets for lethal strikes, such as the American Project Maven and the Israeli Gospel (Habsora).

All these various applications of AI can be used, not just to make military operations more efficient, but to make them more humane as well, US officials have long argued. “We see tremendous promise in this technology,” the State official said. “We see tremendous upside. We think this will help countries discharge their IHL [International Humanitarian Law] obligations… so we want to maximize those advantages while minimizing any potential downside risk.”

That requires establishing norms and best practices “across the waterfront” of military AI, the US government believes. “It’s important not to [under]estimate the need to have a consensus around how to use even the back office AI in a responsible way,” the official said, “such as [by] having international legal reviews, having adequate training, having auditable methodologies…. These are fundamental bedrock principles of responsibility that can apply to all applications of AI, whether it’s in the back office or on the battlefield.”
